Key Takeaway

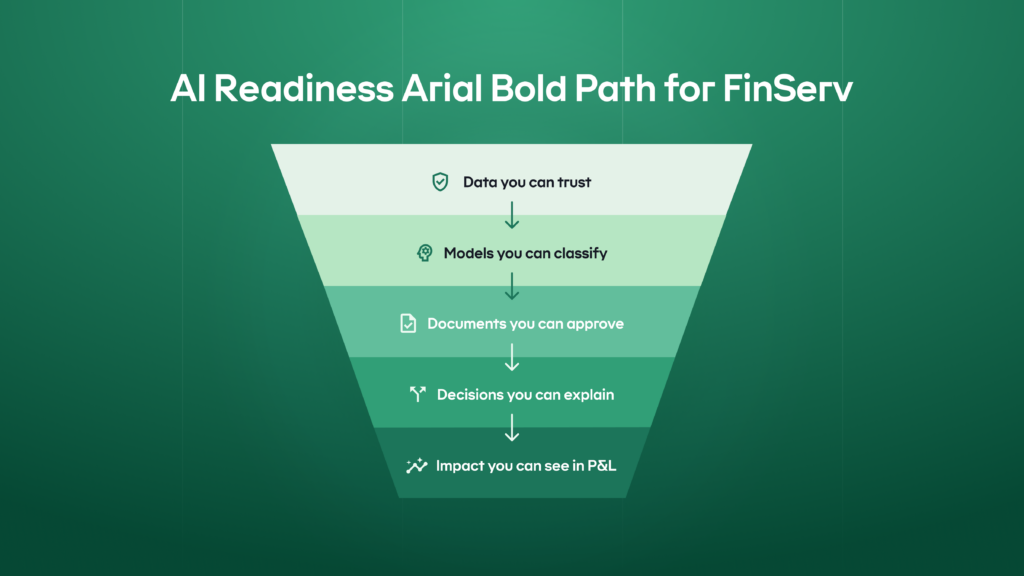

- AI in FinServ is production-ready when governance, data controls, and auditability are designed in from day one and enforced in delivery.

- Real AI readiness means knowing exactly which models run where, on which data, under which controls, with clear ownership and approval flows.

- If AI decisions cannot be explained, reproduced, audited, and rolled back, the system is not safe for regulated workflows.

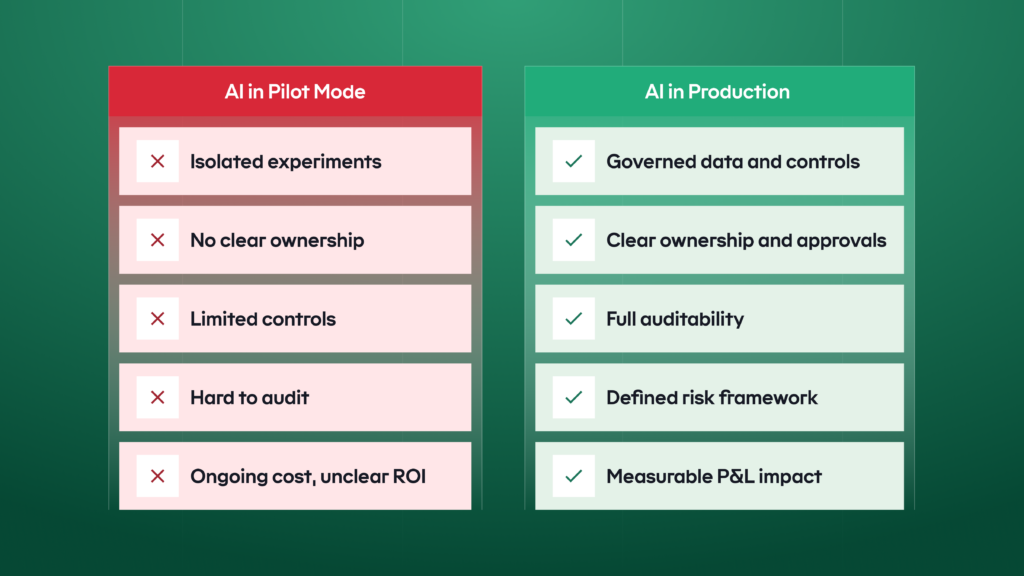

- When AI remains stuck in pilots, organizations carry ongoing delivery costs and unmeasured model and compliance risk.

- AI readiness can be demonstrated through measurable indicators across governance, data, model risk, security, and operations.

The gap between early and late AI adopters is widening. Firms that get artificial intelligence into production with proper controls move faster at lower cost, improving ROI. Those stuck in pilot mode carry higher operating expenses and greater model and compliance risk.

This is a checklist for finance leaders who need to see whether AI adoption will translate into measurable P&L impact without increasing controllable risk. It is based on cases from Tier 1 FinServ companies (including Bank of America, Morgan Stanley, and Citibank), US Federal Reserve guidance, World Economic Forum AI reports, and our own experience in FinServ and AI.

It will help you see where you stand, walk you through key AI readiness indicators common to most FinServ businesses, and help you identify gaps before implementing AI in financial workflows.

The AI-Readiness Checklist

| Control Area | Key Governance Elements |
|---|---|
| 1. KYC/AML Data Lineage and Access | • KYC files, transactions, alerts, and case notes mapped to a system of record with a named owner and data SLAs. • Automated PII detection runs before an LLM processes any data; masking or tokenization is applied by default. • Role-based access control enforces least-privilege access for both users and AI assistants. |
| 2. Model Risk Classification | • Tiered model inventory with clear ownership and scope across production and testing environments. • Risk-aligned testing and approval processes implemented before deployment. • Models, prompts, and key data feeds tracked by version with defined change management and rollback procedures. |
| 3. Regulated Document HITL (Human-in-the-Loop) | • Defined “maker–checker” process for regulated documents with explicit thresholds for AI assistance. • Governed templates with source attribution and policy validation. • End-to-end evidence trail answering who acted, when, and using which data. |
| 4. Fair Lending and Suitability Tests | • Product-level fairness metrics with predefined thresholds and stop/go rules before deployment. • Explainability aligned with regulatory requirements so customer explanations match model logic. • Ongoing monitoring dashboards tracking disparities, overrides, and complaint signals. |
| 5. Weekly P&L Scorecard | • Locked baselines and named metric owners for handling time, loss rate, and conversion before rollout. • Simple formulas with full auditability tied directly to financial system logs and reports. • Budget, rollout, and resourcing adjustments made within the same month based on performance insights. |

The 5-point FinServ AI Readiness Checklist Explained

How artificial intelligence lands in a retail bank, a card issuer, or a wealth manager will differ. But a small set of common building blocks tends to decide whether it works.

The five points below are those blocks – the patterns that recur across most FinServ businesses and the places where value and risk concentrate first when you scale AI.

1. KYC/AML data quality and access

As you bring AI and LLMs into KYC/AML, the financial data they use must be trustworthy and compliant. That means every data point is traceable back to a system of record, personally identifiable information (PII) is masked or tokenized before any LLM call, and access is controlled through role-based access control (RBAC).

Unauthorized access can cause a data leak, and penalties can follow if that data is publicly exposed. But removing AI from this part of operations raises costs and slows client verification.

Why AI in KYC matters for revenue

| Outcome | Impact |
|---|---|
| Reworks and case resolution | Clean, well-structured data reduces back-and-forth, shortens KYC/AML investigations, and cuts analyst minutes per case. |
| Breach and penalty exposure | Consistent PII masking, tokenization, and access controls reduce the odds of data misuse, breaches, and the regulatory fines that follow. |
| Smoother regulator reviews | When you can clearly show where data comes from and how it’s used, audits move faster, and remediation efforts stay focused and manageable. |

You can tell whether AI is working well in this area by checking a few key points, such as how much effort it takes to retrieve data and whether that data is protected from unauthorized access.

What “good” looks like

✓ Authoritative sources with clear lineage and owners.

KYC files, transactions, alerts, and case notes are each mapped to a system of record with a named owner and basic data SLAs (freshness, completeness, availability).

✓ PII protection and minimization before any model call.

Automated PII detection runs before an LLM sees anything; masking or tokenization happens by default. Prompts and templates are designed to pull only the fields the assistant actually needs.

✓ Controlled access and compliant retrieval.

RBAC enforces least-privilege access for users and AI assistants. Queries are restricted to a governed whitelist of records, data residency and lawful basis are checked, and exceptions use a monitored, logged “break-glass” process rather than ad-hoc workarounds.
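The second and third points above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production control: the two regex patterns, the `ROLE_FIELDS` whitelist, the role names, and the salt are all hypothetical, and a real deployment would rely on a vetted PII detection service and a vault-backed tokenization scheme rather than a truncated hash.

```python
import hashlib
import re

# Illustrative patterns only (assumption); real PII detection covers far more types.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical least-privilege whitelist: which record fields each role may send to a model.
ROLE_FIELDS = {
    "kyc_assistant": {"case_id", "case_notes"},
    "kyc_analyst": {"case_id", "case_notes", "customer_name"},
}


def tokenize_pii(text: str, salt: str = "demo-salt") -> str:
    """Replace each detected PII value with a stable token such as <SSN:1a2b3c4d>."""

    def make_replacer(kind: str):
        def replacer(match: re.Match) -> str:
            digest = hashlib.sha256((salt + match.group(0)).encode()).hexdigest()[:8]
            return f"<{kind}:{digest}>"

        return replacer

    for kind, pattern in PII_PATTERNS.items():
        text = pattern.sub(make_replacer(kind), text)
    return text


def build_prompt_fields(record: dict, role: str) -> dict:
    """Keep only the fields whitelisted for the role, then tokenize PII in string values."""
    allowed = ROLE_FIELDS.get(role, set())  # unknown roles get nothing
    return {
        key: tokenize_pii(value) if isinstance(value, str) else value
        for key, value in record.items()
        if key in allowed
    }
```

Calling `build_prompt_fields(record, "kyc_assistant")` on a case record drops `customer_name` entirely and replaces any email address or SSN in `case_notes` with a token, so the model only sees the fields and values it actually needs.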

Getting KYC/AML data lineage and access to this level does two things:

- It reduces avoidable operational and compliance drag today by improving data clarity, ownership, and auditability.

- It creates a safe foundation for AI-assisted investigations and monitoring tomorrow, enabling automation without compromising regulatory control.

But is it safe enough?

In regulated environments, AI is considered safe only when decisions can be audited, explained, and reversed.

2. Model risk classification

As you scale AI in finance across credit, fraud, and customer decisions, not every use case carries the same level of risk. You need a clear framework to classify each model, align test depth to that risk, and move through an approval path that fits SR 11-7 (the U.S. Federal Reserve’s supervisory guidance on model risk management) and ECB (European Central Bank) expectations.

A clear risk structure simplifies and speeds up approvals, and it reduces the likelihood of regulatory fines for biased client-related decisions.

Why classifying risk models matters for revenue

| Outcome | Impact |
|---|---|
| “Surprise” stoppages and rework | When every AI use case is mapped to a risk tier up front, there are fewer last-minute blocks from Model Risk or Compliance, and less budget lost to redesign and re-documentation. |
| Time-to-cash | Pre-agreed approval paths by tier shorten the journey from prototype to production, helping organizations realize cost savings, loss reductions, or revenue lift sooner. |
| Incident and remediation costs | Right-sized controls for higher-risk models reduce the likelihood of major incidents such as fines, revenue holds, forced rollbacks, and expensive remediation programs. |

Because you need to keep verifying that each model is used correctly and is free of fundamental errors, a risk-tiered approach gives you a repeatable way to test and deploy models securely.

What “good” looks like

✓ Tiered model inventory with clear owners and scope.

A central register lists each AI use case, its purpose, risk tier, P&L owner, key control points, and a change log. Everyone can see what’s in production, what’s being tested, and who is accountable.

✓ Risk-aligned testing and approvals.

Each tier has a defined evaluation pack: accuracy and stability checks, bias/fairness assessment, privacy and PII-leak tests, robustness and scenario runs.

✓ Controlled lifecycle and ongoing monitoring.

Models, prompts, and key data feeds are tracked by version, with a simple change process and a clear way to roll back if something goes wrong. Live AI usage is monitored for performance changes, errors, slow response times, and unexpected cost. Higher-risk models are reviewed more often than low-risk ones, so the level of control stays in line with the impact they can have.
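A central register of this kind can be modelled very simply. The sketch below is one possible shape, assuming three tiers and made-up evaluation packs; the tier names, the check names in `EVALUATION_PACK`, and the `ModelEntry` fields are illustrative and would come from your own model risk policy.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical evaluation packs: the checks each tier must pass before approval.
EVALUATION_PACK = {
    RiskTier.LOW: {"accuracy"},
    RiskTier.MEDIUM: {"accuracy", "stability", "pii_leak"},
    RiskTier.HIGH: {"accuracy", "stability", "pii_leak", "fairness", "robustness"},
}


@dataclass
class ModelEntry:
    use_case: str
    tier: RiskTier
    owner: str
    version: str
    passed_checks: set = field(default_factory=set)
    change_log: list = field(default_factory=list)  # (from_version, to_version, note)

    def missing_checks(self) -> set:
        """Checks required by the tier that have not been passed yet."""
        return EVALUATION_PACK[self.tier] - self.passed_checks

    def ready_for_approval(self) -> bool:
        return not self.missing_checks()

    def release(self, new_version: str, note: str) -> None:
        """Log the change first, so a rollback target always exists."""
        self.change_log.append((self.version, new_version, note))
        self.version = new_version

    def rollback(self) -> str:
        """Revert the most recent change and keep the rollback itself in the log."""
        if not self.change_log:
            raise ValueError("no earlier version recorded")
        previous = self.change_log[-1][0]
        self.change_log.append((self.version, previous, "rollback"))
        self.version = previous
        return previous
```

The design choice worth noting is that a rollback appends to the change log instead of deleting from it, so the register still shows that the newer version was live for a period.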

Putting model risk classification on this footing turns AI from an ad hoc set of experiments into a governed portfolio of assets. You know which use cases you have, how risky they are, what evidence they require, and when they need to be revisited.

Even so, you cannot entrust every process to AI, and here’s why.

3. Regulated document HITL (human-in-the-loop)

As you use AI tools to draft regulated documents such as suspicious activity reports (SARs), adverse-action letters, financial promotions, and suitability notes, you can’t let the system auto-send AI outputs. For these outputs, a human reviewer needs to stay firmly in the loop before anything goes outside the bank.

Otherwise, you risk reputational and financial losses from poorly worded communications or mishandled client cases.

Why HITL matters for revenue

| Outcome | Impact |
|---|---|
| Fines and costly rework | A clear human sign-off step helps catch regulatory issues before they go out the door, reducing the chance of breaches, redraws, and expensive clean-up exercises. |
| Faster, cleaner throughput | Defined checkpoints and approvers reduce back-and-forth and escalations. Cases move from draft to approved in fewer cycles, with less time lost in email ping-pong. |
| Remediation costs | Fewer inappropriate or incorrect communications mean fewer complaints, make-good credits, and remediation programs that erode margin and trust. |

Regulated documentation in FinServ demands extra care, but that does not mean everything should be built around avoiding costly mistakes. It is enough to establish a system that addresses the most important pain points.

What “good” looks like

✓ Explicit checkpoints with owners and SLAs.

A clear “maker – checker” flow for regulated documents, with named approvers, segregation of duties, and time targets. The scope is written down: exactly which documents require HITL, at what thresholds, and when AI is allowed to assist only with drafts.

✓ Governed templates with sources and policy checks.

Standard templates that auto-pull the required data fields, reference systems of record, enforce redaction rules, and run compliance validations (forbidden phrases, claims, or disclosures) before anything is submitted for approval.

✓ End-to-end evidence trail.

Each item carries a trace ID linking to the prompt, data sources, model and version, edits, and the approver’s decision and rationale. That record is stored immutably according to retention policy and regularly sampled for QA, so you can answer “who did what, when, and based on which data” in seconds, not weeks.
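To make the maker-checker rule and the evidence trail concrete, here is a small sketch in Python. It is an illustration under assumptions, not a reference design: the class and field names are hypothetical, immutability is approximated with a SHA-256 hash chain (a production system would typically use write-once storage as well), and timestamps are passed in as ordinary details to keep the example deterministic.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(payload: dict, prev_hash: str) -> str:
    """Hash an entry together with the previous entry's hash (a simple hash chain)."""
    body = json.dumps(payload, sort_keys=True) + prev_hash
    return hashlib.sha256(body.encode()).hexdigest()


@dataclass
class EvidenceTrail:
    trace_id: str
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, **details) -> None:
        """Append who did what, plus JSON-serializable details (model, version, sources)."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        payload = {
            "trace_id": self.trace_id,
            "actor": actor,
            "action": action,
            "details": details,
        }
        self.entries.append({**payload, "hash": _digest(payload, prev_hash)})

    def approve(self, checker: str, rationale: str) -> None:
        """Segregation of duties: whoever drafted the item cannot approve it."""
        makers = {e["actor"] for e in self.entries if e["action"] == "draft"}
        if checker in makers:
            raise PermissionError("maker cannot approve their own draft")
        self.record(checker, "approve", rationale=rationale)

    def verify(self) -> bool:
        """Recompute the chain; any edit to an earlier entry breaks it."""
        prev_hash = ""
        for entry in self.entries:
            payload = {k: v for k, v in entry.items() if k != "hash"}
            if entry["hash"] != _digest(payload, prev_hash):
                return False
            prev_hash = entry["hash"]
        return True
```

A draft would be logged with something like `trail.record("analyst_a", "draft", model_version="1.0", sources=["case-17"])`, after which only a different person can call `approve`. If anyone later alters a stored entry, `verify()` returns `False`.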

Putting regulated documents under a defined human-in-the-loop (HITL) regime ensures AI can accelerate drafting while maintaining full control over what ultimately leaves the organization.

But what’s drafted also needs to be compliant, and this job can be handled by AI.

4. Fair lending and suitability tests

When you use AI for credit decisions, underwriting, or targeted offers, you’re operating in a tightly regulated space. You need to show that your decisions are fair, explainable, and aligned with ECOA (the U.S. law prohibiting discrimination in credit) and Regulation B (the detailed rules that implement ECOA), and that you can generate compliant adverse-action reasons every time.

Biased decision-making in this area may lead to a temporary reduction in the client base and to more prolonged reputational damage.

Why fair lending matters for revenue

| Outcome | Impact |
|---|---|
| Penalties and portfolio freezes | Gaps in fair lending controls can lead to fines, restitution, and even constraints on portfolio growth – direct hits to both earnings and strategy. |
| Disputes and rescinds | Clear, consistent explanations for approvals and declines reduce complaints, disputes, and the time spent reworking or rescinding decisions. |
| Safer approval box | When you can quantify and control bias, you can safely widen approval criteria, growing balances and revenue without increasing legal or regulatory risk. |

AI that makes unbiased, explainable, and well-documented financial decisions protects the company from lengthy regulatory inspections and reduces customer complaint volume.

What “good” looks like

✓ Defined fairness tests and thresholds.

Each product has documented metrics, including protected-class proxies where appropriate. Thresholds and stop/go rules are agreed in advance and applied before any model or policy change goes live.

✓ Explainability with compliant reasons.

Every decision captures human-readable key factors and maps them to an approved list of adverse-action reasons. What you tell the customer and regulator matches what the model actually used – no “black box” surprises.

✓ Ongoing monitoring and remediation.

Production dashboards track disparities, overrides, and complaints over time. You run periodic back-testing and champion/challenger comparisons, and you have a playbook to adjust cutoffs, features, or policies when drift or disparity breaches your agreed limits.
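One common way to express a fairness test with a stop/go rule is an approval-rate ratio between each group and a reference group. The sketch below is only an example of the pattern: the group labels are placeholders, and the default threshold of 0.8 is an assumption borrowed from the "four-fifths" rule of thumb, not a limit prescribed for lending. Your own metrics and thresholds should be the ones agreed with Compliance and Legal.

```python
def approval_rate(decisions: list) -> float:
    """Share of approved decisions; `decisions` is a non-empty list of booleans."""
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(group_decisions: dict, reference: str) -> dict:
    """Approval rate of each group divided by the reference group's approval rate."""
    ref_rate = approval_rate(group_decisions[reference])
    return {
        group: approval_rate(decisions) / ref_rate
        for group, decisions in group_decisions.items()
        if group != reference
    }


def stop_go(ratios: dict, threshold: float = 0.8) -> str:
    """Return "go" only if every group's ratio meets the agreed threshold (assumed 0.8)."""
    return "go" if all(ratio >= threshold for ratio in ratios.values()) else "stop"
```

Run before any model or policy change goes live, a "stop" result blocks the release until cutoffs, features, or policies are adjusted. The same function can feed a production dashboard on a schedule.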

Embedding fair lending and suitability tests at this level turns AI-driven credit and offer strategies into assets you can defend to regulators, to customers, and to your own risk committee.

But how do you check whether all that AI adoption is making real progress?

5. Weekly P&L scorecard

To keep your artificial intelligence honest, you need to see the impact of AI and automation in your P&L (profit and loss), not just in slideware. A simple weekly scorecard for the executive leadership team and the finance team with a few hard numbers is enough to decide whether an AI use case should stop, be fixed, or be scaled.

Why regular P&L checks matter for revenue

| Outcome | Impact |
|---|---|
| Turning AI into booked savings | Minutes saved and loss reduction are translated into specific amounts of money, so they can be included in planning and defended in forecast meetings. |
| Killing “pilot theater” quickly | If adoption is low or performance improvements are weak, it shows up in real time. That triggers a stop-or-fix decision instead of letting experiments drag on for quarters. |
| A real operating cadence | Making a decision every 30 days – stop, fix, or scale – compounds impact. Small wins add up, and underperforming initiatives don’t soak up budget and attention. |

When you assess the real impact of AI in your organization, make sure that everyone knows the final goal – to improve operations and financial outcomes.

What “good” looks like

✓ Locked baselines and clear metric owners.

Before rollout, you capture baselines for handling time, loss rate, and conversion. Each of the four numbers on the one-pager – minutes saved (translated to specific money amounts), loss in bps (basis points) vs. baseline, conversion/activation lift, and adoption % – has a named owner.

✓ Simple formulas and full auditability.

Minutes saved are calculated as: volume × time saved × fully-loaded $/minute. Loss deltas are shown in basis points with the underlying exposure. Every figure traces back to system logs or reports, so Finance, Risk, and Audit can reconcile the numbers.

✓ When a gate is hit, you actually move.

Budgets, rollout plans, and resourcing are adjusted in the same month – not “sometime later.”
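The scorecard arithmetic is simple enough to write down directly. The functions below are a sketch: the minutes-saved formula follows the one given above, while the gate thresholds in `gate_decision` (30% adoption, positive value) are hypothetical placeholders for whatever limits your leadership team agrees on.

```python
def minutes_saved_value(volume: float, minutes_saved_per_item: float, cost_per_minute: float) -> float:
    """volume x time saved x fully-loaded $/minute."""
    return volume * minutes_saved_per_item * cost_per_minute


def loss_delta_bps(baseline_loss: float, current_loss: float, exposure: float) -> float:
    """Change in losses vs. baseline, in basis points of exposure (negative = improvement)."""
    return (current_loss - baseline_loss) / exposure * 10_000


def gate_decision(adoption_pct: float, value: float, min_adoption: float = 30.0) -> str:
    """Hypothetical gate: no measurable value -> stop, weak adoption -> fix, otherwise scale."""
    if value <= 0:
        return "stop"
    if adoption_pct < min_adoption:
        return "fix"
    return "scale"
```

As a worked example with made-up inputs, 1,200 cases a week, 4 minutes saved per case, and a fully-loaded cost of $1.50 per minute give 1,200 × 4 × 1.50 = $7,200 a week. Losses falling from $50,000 to $42,000 on $10 million of exposure are a change of -8 bps against baseline.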

With a weekly P&L scorecard in place, AI stops being a fuzzy innovation topic and becomes a managed performance lever.

Conclusion

AI readiness is already a profit-and-loss question for financial services, which is why teams need to be ready for AI, not treat it as a lab experiment or a “nice to have” side project.

The FinServ players pulling ahead aren’t just “trying AI,” they are executing structured AI integration. They’re wiring it into governed data, model risk, regulated documents, and fairness and suitability controls. That’s why the most effective AI programs are increasingly sponsored by CFOs and CROs, not just CTOs: the conversation has moved from technology curiosity to cost, loss, and revenue impact.

If you’re a FinServ organization looking to adopt AI – or to test whether your current efforts will actually pay off – Revenue Grid experts can help from the very start. The company’s programmable Assistant is built to sit inside your existing workflows and to handle regulated data with full auditability.

It runs on your existing datasets, in your existing cloud (AWS, Azure), and respects enterprise controls by design. In practice, that gives you a specific way to apply the checklist above and turn AI from a slide in the innovation deck into a controlled, measurable driver of P&L.

What evidence does an audit need to approve an LLM use case in FinServ?

Audit requires a documented model inventory, clear ownership, and end-to-end traceability of AI decisions. This includes logs of inputs, outputs, approvals, overrides, and version changes. The organization must be able to reconstruct any material AI-assisted decision after the fact.

How do regulators assess whether AI is safe to use in regulated workflows?

Regulators focus on governance maturity rather than model sophistication. They expect clear risk classification, explainability, monitoring, and the ability to intervene or stop the system. AI use cases that cannot be reviewed or rolled back are treated as high risk.

Is it acceptable to use third-party or open-source models in FinServ?

Yes, but only when deployment, data access, and inference behavior are fully controlled. Models must run in approved environments with enforced data boundaries and clear contractual terms. External APIs without audit-grade controls are typically not acceptable.

What makes an AI pilot different from a production-ready AI system?

Pilots demonstrate feasibility, while production systems enforce accountability. Production readiness requires defined owners, approval workflows, monitoring, and rollback mechanisms. Without these, pilots increase operational and compliance risk over time.

How do you prove which data an AI model is allowed to use?

Through enforced access controls, documented data lineage, and auditable policies governing training and inference. Evidence must show that restricted or sensitive data cannot be accessed implicitly. Logs and access reviews are required to support this.

What level of explainability is expected for AI decisions in FinServ?

FinServ does not require full model transparency, but it does require decision traceability. Teams must explain why a result occurred, what inputs were used, and how the outcome can be challenged. Outputs that cannot be contextualized are rarely acceptable.

Who is accountable for AI decisions made inside business workflows?

Accountability remains with the business owner of the workflow. AI does not transfer responsibility; it introduces another controlled component. Human-in-the-loop or maker-checker models are commonly expected.

How often should AI models be reviewed or re-approved?

Reviews should follow a risk-based schedule and occur whenever data, models, or usage change materially. Periodic reassessment is required to detect drift, bias, or control failures. One-time approval is not sufficient.

Can AI be deployed faster without increasing compliance risk?

Yes, when governance and controls are embedded from the start. Defined approval paths and standardized controls reduce friction later. Speed comes from structure, not from bypassing safeguards.

What are the key steps in preparing a financial services company for AI integration?

Start by auditing data quality, accessibility, and governance to ensure AI models can run on clean, compliant, well-structured datasets. Align use cases with measurable business outcomes such as risk reduction, revenue growth, or cost efficiency, then validate them through controlled pilots with clear KPIs. Finally, establish strong model governance, security, and regulatory controls so AI can move from experimentation into production without increasing compliance or operational risk.